Learning Lingo

In 2006 I was four years into my Master’s program in Adult Education at the University of Rhode Island and the end was in sight – just two more courses and a thesis. I had just resigned from the job I’d held for six years as a systems administrator and user support specialist at a small, Rhode Island-based research affiliate of the University of Massachusetts Medical School. I had gotten a new job supporting online training technologies at the mother ship, the UMMS campus in Worcester. I wanted to be an instructional designer (ID), and this position was a stepping stone in that direction. My new job brought with it an hour-long commute, an opportunity to apply the skills I was gaining in graduate school, and – as I learned on day one – a whole new vocabulary.

Here are some of the terms that were new to me at the time or that have confused me as I moved along in this buzzword-filled field. What terms would you add to the list?

01

2 Sigma Problem

02

70/20/10

While there is a lack of empirical evidence to support this breakdown, there are organizations, resources, and even an online community devoted to the model.

Image Source: http://tom.spiglanin.com/2014/12/i-believe-in-the-702010-framework/

03

Analysis

- An audience analysis, or learner analysis, provides insight into learners' preferences, motivations, contexts, knowledge levels, and so on.

- A needs analysis (aka needs assessment) reveals the learning needs of a particular audience regarding a given subject matter.

- A task analysis produces a step-by-step, detailed description of how a task is performed.

- A gap analysis identifies discrepancies between how something is (for example, how a task is performed) and how it should be (for example, how the task should be performed for best results).

04

Analytics

05

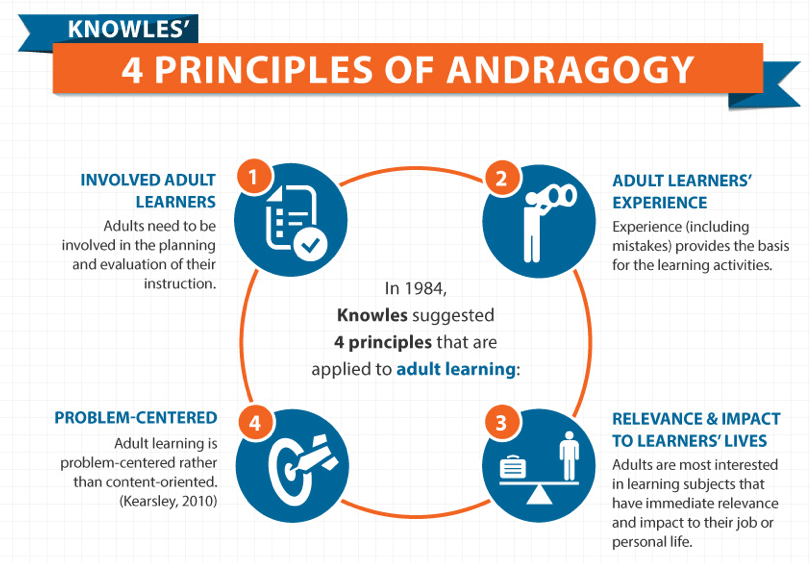

Andragogy/Pedagogy

You may read or hear that pedagogy means the science of teaching children while andragogy is the science of teaching adults. While that is technically true, the word pedagogy is often used in a more generic sense to mean the art and science of teaching, period. Andragogy as a term was popularized by Malcolm Knowles and is associated with his principles of adult learning, which emphasize that adults learn best when:

- they are involved in planning the learning,

- they can learn through experience,

- they are learning topics that they can immediately apply to their lives or work, and

- they are given problems to solve rather than just content to learn.

06

Asynchronous Learning

07

Authentic Assessment

Authentic assessment means having learners complete tasks that mirror what they’ll need to do in the real world. For example, if nurses will need to be able to interview patients to obtain a medical history, an authentic assessment would be having them practice under observation with patients (or people acting as such) or completing simulated conversations online. A multiple choice quiz about interviewing techniques or dragging and dropping the components of a medical history would not be considered authentic assessments, but these types of evaluations can still be important as learners build foundational skills.

08

Backwards Design

Start with the measurable goals or learning objectives, and plan backwards:

- What is the desired result? What should a learner know (or be able to do) by the end of a course or module?

- How will these outcomes be measured? What assessments will indicate success?

- What knowledge or skill does a user need to reach these goals? Design learning activities and experiences based on the above.

Note from Bonnie: Backwards Design comes from McTighe and Wiggins' Understanding by Design (UbD) framework. At Course Kitchen, we add a step before the first one listed above. Before identifying the desired result, we need to understand the learner. An audience analysis resulting in a designer’s empathy for the learner can go a long way toward producing the right learning experience.

09

Blended Learning

Yes, this was a real cake presented to Bonnie and team at a real celebration for the launch of the first blended learning program developed at a global financial services company back in 2008.

10

Branching

11

Chunking

This is the process of dividing content into manageable sections aligned with particular objectives. For example, if a designer is working on a project to teach people how to drive, they might divide, or chunk, the material to be learned into categories such as Readiness, Operation, Traffic, and Laws. Since each category is still quite broad, it may be further chunked such that Readiness includes “Checking Mirrors”, “Seat Adjustment”, “Safety Measures”, and “Assessing the Area”. Each of these chunks might be taught and tested individually before moving on to the next. In this way, complex or dense subject matter (“content”) can be systematically taught and assessed in an accessible manner. There are several strategies for chunking according to cognitive domain or other classifications. An expert designer will choose the approach that best aligns with the content and objectives.

Image Source: http://theelearningcoach.com/elearning_design/chunking-information/

12

Collaborative Design

Beyond leveraging each skill set for various aspects of the process, true collaborative design solicits the regular input and validation of all involved.

One model of collaborative design builds on ADDIE to accommodate contributions from a full range of stakeholders: the 5 D’s (Discovery, Design, Development, Delivery, and Debrief). Under the skilled leadership of an expert designer, this model fosters open and trusting partnerships within a cross-functional design team of widely varying expertise.

13

Design Thinking

- Whether it is referred to as analysis or discovery, the work always starts with attempting to understand and empathize with the needs of the learner and organization.

- Interpretation of, ideation around, and experimentation with the results of the analysis is the heart of the design and development process. This cycle involves iterative rounds of prototyping, reviews and user testing, and modifications.

- Evolution is ultimately the result of testing and evaluation; the purpose of measuring the efficacy of a learning experience is to make continuous improvements.

14

Formative/Summative Assessments

If you wait until the end of a learning experience to evaluate participants on what they’ve learned, that’s summative assessment. And it’s valuable. But it’s also valuable to include some low-stakes formative assessments along the way, so that participants know how well they’re learning, teachers know how well they’re teaching, and both groups can adjust accordingly.

15

Hybrid Learning

16

Informal Learning

Informal learning encompasses all the ways we learn outside of a formal learning experience. It’s usually unplanned and often impromptu. Have you ever been stuck and asked your colleague for help? Or learned a new way of doing something by watching a YouTube video? That’s all informal learning. Organizations are becoming more interested in informal learning because it accounts for so much of how we learn. However, its very nature makes it difficult to track or evaluate. For more on informal learning, see the work of Jay Cross.

17

Instructional Interactivity

The most interactive learning experiences explicitly engage the learner in a process of decision making and feedback. The primary model for such instructional interactivity was developed by Michael Allen and is referred to as CCAF. In this sequence, a learner is given a Context in which to apply learning, an urgent Challenge, and an Activity through which to respond to the challenge. Feedback is then given to reinforce the learning.

The level of interactivity selected for certain elements within a learning experience, or for the experience as a whole, should be determined by a careful needs analysis conducted by an experienced online learning designer. Higher interactivity is not necessarily better, as some learning objectives can be accomplished most effectively without interactivity, via a simple text page or video. A learning designer can help match each learning objective with a strategy to meet the goal in the most effective, efficient and engaging manner.

Image Sources: https://bonlinelearning.com.au/blog/are-we-there-yet-determining-elearning-development-time/; http://www.alleninteractions.com/elearning-instructional-design-ccaf

18

Kirkpatrick

| Level | Value | Timing | Participation |
| --- | --- | --- | --- |
| 1 – Reaction: Did they like the experience? | Learner’s reactions to: content, relevancy, format, methods etc. | Near the end of the experience | Representative sample of the learners |
| 2 – Learning: Did they learn what you wanted them to learn? | Learner’s acquisition of knowledge and skills at the end of the learning experience. | Pre and/or post experience, most useful for experiences aiming to equip participants with a certain level of skill or knowledge | All learners |
| 3 – Behavior: Has their behavior or performance changed as a result of what they learned? | Learner’s sustained behavior changes outside of the learning experience | Post-experience for each learner, only useful in conjunction with the data above | All learners and, when possible, their supervisors |
| 4 – Results: Did the experience achieve the desired results for the organization? | Cost benefit analysis to the organization | Post-experience for all learners, only useful in conjunction with the data above | Supervisors |

19

Learning Management System (LMS)

A Learning Management System (LMS) is a software platform that serves as a hub of educational activity. It presents and stores curriculum and all records of student progress, and it may be used for online, hybrid, and face-to-face education and training environments. Learners can access their courses, submit work, track progress, and interact with other users within a course. Instructors use the LMS to deliver course materials, administer tests, and view learner activity within a course, including group discussions, assessments, and even how much time students actively spend on course content.

Bonnie’s Note: There is a longstanding debate in academia about the merits of a learning management system. Does such a system focus on management over and above learning? In today’s open ed tech landscape, is a consolidated platform necessary for effective collaborative learning? While LMS providers like Blackboard and Canvas aren’t likely to disappear any time soon, thought leaders such as Clark Quinn and Tony Bates have been provoking important conversation about the current nature of learning.

20

Learning Styles

21

Levels of Evaluation

22

Modality

23

Multi-modal

24

Objectives

Image Source: http://www.celt.iastate.edu/wp-content/uploads/2015/09/RevisedBloomsHandout-1.pdf

25

Platform

Selling platforms, learning platforms, software platforms, cloud-based platforms, mobile platforms, and of course, platform shoes. What is a platform? At its most basic, it is a place on which to build or produce. In instructional design contexts, it is simply the “how” and “where” information and materials are presented: an LMS, a physical classroom, social media, a learning app that integrates with social media.

26

SCORM/Tin-Can API

When I first became interested in instructional design, SCORM intimidated me. I knew it had something to do with technical specifications, but not much else. Shareable Content Object Reference Model (SCORM) refers to technical standards that allow online educational content to work with Learning Management Systems (LMS). SCORM creates a nice smooth road for online content to travel to computer based systems that house and make the content available. With the development of mobile learning, another set of standards is emerging called Tin Can API or Experience API (xAPI). While some say that Tin Can will replace SCORM, they don’t really do the same thing.
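To make xAPI a little less abstract, here is a minimal Python sketch of the kind of record it deals in: a "statement" built from an actor, a verb, and an object, which would typically be sent to a Learning Record Store. The learner name, email address, and course URL are made up for illustration, and `build_completed_statement` is a hypothetical helper, not part of any library. Treat it as a sketch of the statement's general shape rather than a complete implementation.

```python
import json


def build_completed_statement(learner_name, learner_email, activity_id, activity_name):
    """Return an xAPI-style statement ("<actor> completed <activity>") as a dict.

    This helper is hypothetical; it only assembles the three core parts
    of a statement and does not send anything anywhere.
    """
    return {
        # Who did it: the learner, identified here by an email address.
        "actor": {
            "objectType": "Agent",
            "name": learner_name,
            "mbox": f"mailto:{learner_email}",
        },
        # What they did: verbs are identified by an IRI plus a display label.
        "verb": {
            "id": "http://adlnet.gov/expapi/verbs/completed",
            "display": {"en-US": "completed"},
        },
        # What they did it to: the learning activity.
        "object": {
            "objectType": "Activity",
            "id": activity_id,
            "definition": {"name": {"en-US": activity_name}},
        },
    }


# Example values below are invented for illustration only.
statement = build_completed_statement(
    learner_name="Example Learner",
    learner_email="learner@example.com",
    activity_id="http://example.com/courses/patient-interviewing",
    activity_name="Patient Interviewing Basics",
)

print(json.dumps(statement, indent=2))
```

Reading the printed result as a sentence ("Example Learner completed Patient Interviewing Basics") is a handy way to remember what a statement captures.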

27

Self-Paced Learning

28

Smile Sheets

Assessing the effect of learning on participants is key; too often this is limited to a quick post-experience survey. These “smile sheet” surveys are problematic because they only tell us how participants feel about the experience (Level One on the Kirkpatrick scale). They don’t allow us to measure how much learning actually took place, and whether the experience resulted in any new behavior or performance improvements.

Dr. Will Thalheimer presents a research-based approach to more effective “smile sheets” in his book, Performance-Focused Smile Sheets.

29

Synchronous Learning

30

Universal Design for Learning (UDL)

This term can be confusing since many people think it relates only to making content accessible for those with disabilities or learning challenges. According to CAST, a nonprofit group that has supported and promoted UDL since the 1980s, it is a “research-based set of principles to guide the design of learning environments that are accessible and effective for all”. While originally developed to utilize computer technology to support students with disabilities in the K-12 classroom, the principles and guidelines are now also used in higher education and workplace training. The UDL framework consists of guidelines to provide Multiple Means of Engagement, Representation, and Action & Expression that make learning accessible to anyone, anywhere.

UDL Guidelines graphic organizer developed by the National Center on Universal Design for Learning